Maeesha Binte Hashem

PhD Student @ University of Minnesota

I am a first-year Ph.D. student in Electrical and Computer Engineering at the University of Minnesota Twin Cities. I completed my Master’s in Electrical and Computer Engineering at the University of Illinois Chicago, and my Bachelor’s in Electrical and Electronic Engineering at Bangladesh University of Engineering and Technology (BUET).

During my Master’s, I had the opportunity to complete a co-op internship at Intel in Fall 2024. Prior to that, I worked as a Physical Design Engineer at Neural Semiconductor in Dhaka, Bangladesh after completing my undergraduate studies.

Research

My research focuses on evaluating and optimizing computer architectures for emerging workloads. I am broadly interested in Machine Learning, Compilers, and VLSI Design.

TimeFloats: Train-in-Memory with Time-Domain Floating-Point Scalar Products

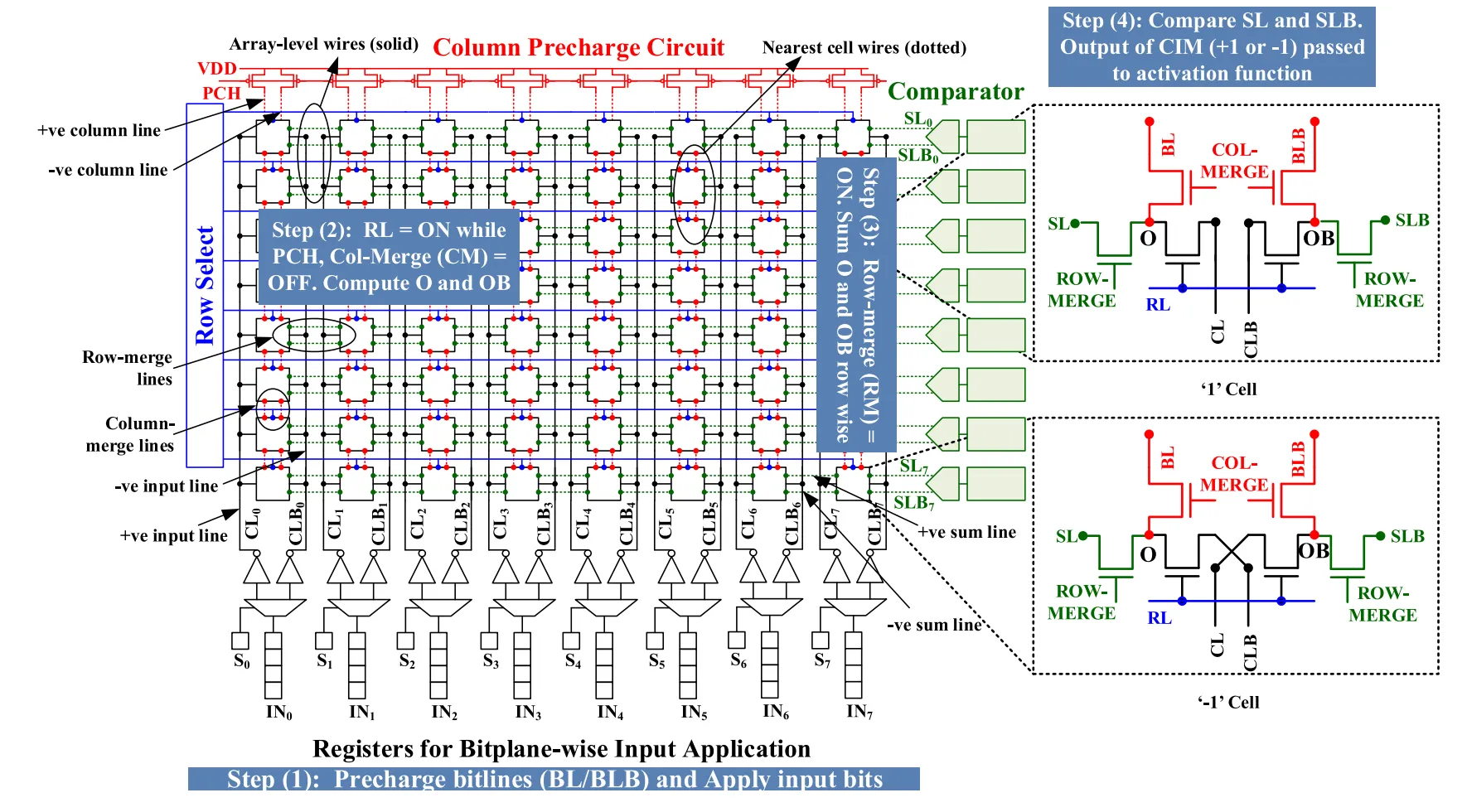

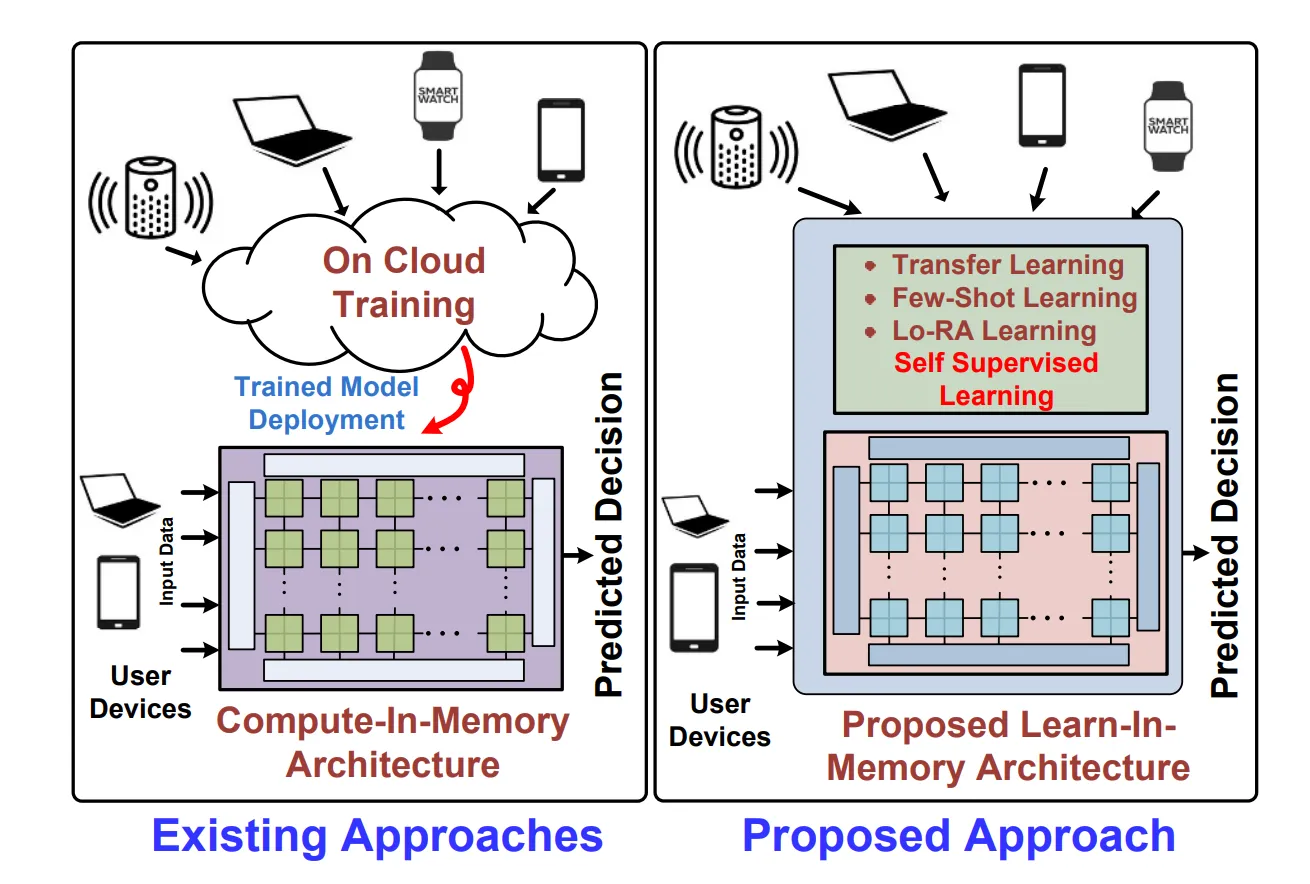

In this work, we propose “TimeFloats,” an efficient train-in-memory architecture that performs 8-bit floating-point scalar product operations in the time domain. While building on the compute-in-memory paradigm’s integrated storage and inferential computations, TimeFloats additionally enables floating-point computations, thus facilitating DNN training within the same memory structures. Traditional compute-in-memory approaches face challenges such as higher power consumption and increased design complexity; in contrast, TimeFloats leverages time-domain signal processing to avoid conventional domain converters, reducing power consumption and noise sensitivity while enabling high-resolution computations.

Towards Model-Size Agnostic, Compute-Free, Memorization-based Inference of Deep Learning

This paper proposes a novel memorization-based inference (MBI) scheme that is compute-free and requires only lookups. Specifically, our work capitalizes on the inference mechanism of the recurrent attention model (RAM), where only a small window of the input domain (a glimpse) is processed in one time step, and the outputs from multiple glimpses are combined through a hidden vector to determine the overall classification output. By leveraging the low dimensionality of a glimpse, our inference procedure stores key-value pairs comprising the glimpse location, patch vector, etc. in a table. Computations are obviated during inference by reading key-value pairs out of the table, so inference is performed by memorization alone.
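The lookup idea can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration and is not taken from the paper: the class name `LookupInference`, the coarse `quantize_patch` binning, the use of integer ids for hidden states, and the toy table contents. It only shows how a sequence of glimpses could be resolved with table reads and no arithmetic on weights.

```python
from typing import Dict, Tuple

# Hypothetical key layout: (glimpse location, quantized patch, hidden-state id)
Key = Tuple[Tuple[int, int], Tuple[int, ...], int]


def quantize_patch(patch, levels=2):
    """Map each pixel in [0, 1] to one of `levels` bins so the patch can act as a table key."""
    return tuple(min(int(p * levels), levels - 1) for p in patch)


class LookupInference:
    """Toy memorization-based inference: every step is a dictionary read."""

    def __init__(self):
        self.table: Dict[Key, int] = {}     # key -> next hidden-state id
        self.readout: Dict[int, str] = {}   # final hidden-state id -> class label

    def store(self, location, patch, hidden, next_hidden):
        """Memorize one (location, patch, hidden) -> next_hidden transition."""
        self.table[(location, quantize_patch(patch), hidden)] = next_hidden

    def infer(self, glimpses, hidden=0):
        """Walk the glimpse sequence using lookups only, then read out the label."""
        for location, patch in glimpses:
            hidden = self.table[(location, quantize_patch(patch), hidden)]
        return self.readout[hidden]


# Toy usage with made-up entries.
mbi = LookupInference()
mbi.store((0, 0), [0.1, 0.9], hidden=0, next_hidden=1)
mbi.store((1, 1), [0.8, 0.2], hidden=1, next_hidden=2)
mbi.readout[2] = "cat"
label = mbi.infer([((0, 0), [0.05, 0.95]), ((1, 1), [0.7, 0.3])])
```

In this sketch, nearby patches share a key because of the coarse binning, which is one simple way to keep the table small; the actual work may handle unseen keys and table size quite differently.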

Experience

My professional journey.

System Architect and Design Engineer Intern

Designed a hardware cost model framework to evaluate and explore the design space of fully digital compute-in-memory macros.

Graduate Teaching Assistant

- Conducted lab sessions, graded exams and projects, proctored exams, and held regular office hours to support undergraduate instruction in core ECE courses.

- Assisted in delivering course content and supporting student understanding in both theoretical and hands-on lab environments.

Courses Supported: ECE 467 – Introduction to VLSI Design, ECE 266 – Introduction to Embedded Systems, ECE 317 – Digital Signal Processing, ECE 366 – Computer Organization, and ECE 265 – Introduction to Logic Design.

Physical Design Engineer

- Led full-chip physical design (netlist to GDSII) for a 40nm AI chip with over 500,000 instances, executing key implementation stages including floorplanning, placement, clock tree synthesis (CTS), routing, and sign-off (DRC, LVS, LEC, ERC).

- Performed RTL-to-GDSII physical implementation on multiple 45nm in-house blocks, focusing on timing closure and power optimization.

- Developed automation scripts in TCL, Bash, and Python for IP block and pin placement, LEF/DEF/Lib file generation, tool output parsing, and flow integration.

- Customized and extended YAML-based design automation flows for reusable IP integration.

- Completed Cadence-certified training on Genus Synthesis Solution and Innovus Implementation System.

- Participated in fabless semiconductor manufacturing training, gaining cross-functional flow awareness.

Education

My academic background.

PhD in Electrical and Computer Engineering

University of Minnesota Twin Cities

GPA: 3.83/4.00

Core Coursework: EE 8310: Advanced Topics in VLSI, EE 5340: Introduction to Quantum Computing and Physical Basics of Computing, CSCI 5523: Introduction to Data Mining, EE 5324: VLSI Design II, and EE 5393: Circuits, Computation, and Biology.

MSc in Electrical and Computer Engineering

University of Illinois Chicago

GPA: 3.59/4.00

Core Coursework: CS 401 (Computer Algorithms I), ECE 407 (Pattern Recognition I), ECE 465 (Digital Systems Design), ECE 466 (Advanced Computer Architecture), ECE 467 (Introduction to VLSI Design), ECE 491 (Introduction to Neural Networks), ECE 540 (Physics of Semiconductor Devices), ECE 559 (Neural Networks), ECE 566 (Parallel Processing), ECE 567 (Advanced VLSI Design), ECE 568 (Advanced Micro Architecture), and ECE 569 (High-Performance Processors and Systems).

MSc Thesis: Exploring Time-Domain Floating-Point Computation for Neural Network Training in Compute-in-Memory Systems

B.Sc. in Electrical and Electronic Engineering

Bangladesh University of Engineering and Technology

Major: Electronics | Minor: Power

GPA: 3.48/4.00

Core Coursework: Electrical Circuits, Electronic Circuit Simulation, Electronics, Electromagnetics, Numerical Technique, Continuous Signals and Linear Systems, Digital Logic Design, Energy Conversion, Power System, Power Electronics, Electrical Properties of Materials, Electrical Services Design, Microprocessor and Interfacing, Analog Integrated Circuit, Control System, Solid State Device, Compound Semiconductor and Hetero Junction Devices, VLSI, Optoelectronics, and Semiconductor Device Theory.

B.Sc. Thesis: Robust Spin Electron Transport in the Presence of Magnetic Field in Square Lattice: Explored spin-resolved electron transport properties in two-dimensional square lattice systems under external magnetic fields. Analyzed robustness and interference effects using quantum transport theory and simulation tools.

Skills & Expertise

Tools, technologies, and methodologies I specialize in.

Languages

- English

- Bangla

- Hindi

Programming Languages

- Python

- C/C++

- Verilog

- SystemVerilog

- MATLAB

Machine Learning

- Machine Learning

- Deep Learning

- PyTorch

- TensorFlow

Scripting Languages

- TCL

- Perl

- Bash

- Shell

EDA Design Tools

- Cadence Genus

- Innovus

- Virtuoso Layout

- Virtuoso Schematic

- Spectre

- HSPICE

Parallel Programming

- CUDA

- Pthreads

- OpenMP

Publications

Research contributions and papers.

TimeFloats: Train-in-Memory with Time-Domain Floating-Point Scalar Products

Maeesha Binte Hashem, Benjamin Parpilon, Divake Kumar, Dinithi Jayasuriya, Amit Ranjan Trivedi

International Conference on VLSI Design (VLSID), Kolkata, India

Single-Step Extraction of Transformer Attention with Dual-Gated Memtransistor Crossbars

Nethmi Jayasinghe, Maeesha Binte Hashem, Dinithi Jayasuriya, Leila Rahimifard, Min-A Kang, Vinod K. Sangwan, Mark C. Hersam, Amit Ranjan Trivedi

IEEE Electron Device Letters (EDL)

Towards Model-Size Agnostic, Compute-Free, Memorization-based Inference of Deep Learning

Davide Giacomini, Maeesha Binte Hashem, Jeremiah Suarez, Swarup Bhunia, Amit Ranjan Trivedi

International Conference on VLSI Design (VLSID), Kolkata, India

ADC/DAC Free Analog Acceleration of Deep Neural Networks with Frequency Transformation

Nastaran Darabi, Maeesha Binte Hashem, Hongyi Pan, Ahmet Cetin, Wilfred Gomes, Amit Ranjan Trivedi

IEEE Transactions on VLSI Systems (TVLSI)

Exploiting Programmable Dipole Interaction in Straintronic Nanomagnet Chains for Ising Problems

Amit Ranjan Trivedi, Nastaran Darabi, Maeesha Binte Hashem, Supriyo Bandyopadhyay

International Symposium on Quality Electronic Design (ISQED), San Francisco, CA, USA

Memory-Immersed Collaborative Digitization for Area-Efficient Compute-in-Memory Deep Learning

Shamma Nasrin, Maeesha Binte Hashem, Nastaran Darabi, Benjamin Parpilon, Farah Fahim, Wilfred Gomes, Amit Ranjan Trivedi

IEEE International Conference on Artificial Intelligence Circuits and Systems (AICAS), Hangzhou, China

Solving Boolean Satisfiability with Stochastic Nanomagnets

Maeesha Binte Hashem, Nastaran Darabi, Supriyo Bandyopadhyay, Amit Ranjan Trivedi

International Conference on Electronics, Circuits and Systems (ICECS), Glasgow, UK

Hardware Trojan Detection Using Slope of Path Delay Trend: Combination of Clock and DC Sweep

Mahmudul Hasan, Sudipto Baul, Maeesha Binte Hashem, Hamidur Rahman

International Conference on Electrical and Computer Engineering (ICECE), Dhaka, Bangladesh

Unmanned Floating Waste Collecting Robot

Abir Akib, Faiza Tasnim, Disha Biswas, Maeesha Binte Hashem, Kristi Rahman, Arnab Bhattacharjee, Sheikh Anowarul Fattah

IEEE Region 10 International Conference (TENCON), Kochi, India

Academic Projects

Key projects and implementations.

Wildfire Image Classification

Built a neural network model to classify wildfire imagery, focusing on feature learning, training accuracy, and performance evaluation.

Parallel 2D Convolution with MPI

Developed and benchmarked 2D FFT-based image convolution using SPMD and task/data-parallel models on 1 to 16 processors, evaluating computation and communication performance using MPI.
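As a rough illustration of the underlying computation, the following serial sketch performs a circular 2D convolution through the row-column FFT decomposition. It is not the project's MPI code: the function names are hypothetical, the sizes are assumed to be powers of two, and no zero padding is applied. The row FFTs in `fft2` are independent of one another, which is the kind of work a data-parallel version would typically split across processes.

```python
import cmath


def fft(x, inverse=False):
    """Recursive radix-2 FFT; len(x) must be a power of two. The inverse is left unscaled."""
    n = len(x)
    if n == 1:
        return list(x)
    even = fft(x[0::2], inverse)
    odd = fft(x[1::2], inverse)
    sign = 1 if inverse else -1
    out = [0j] * n
    for k in range(n // 2):
        t = cmath.exp(sign * 2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + t
        out[k + n // 2] = even[k] - t
    return out


def transpose(m):
    """Swap rows and columns of a list-of-lists matrix."""
    return [list(col) for col in zip(*m)]


def fft2(m, inverse=False):
    """2D FFT as row FFTs, a transpose, row FFTs again, and a transpose back."""
    rows = [fft(r, inverse) for r in m]                 # each row is independent work
    cols = [fft(c, inverse) for c in transpose(rows)]   # columns, after the transpose
    out = transpose(cols)
    if inverse:
        n = len(m) * len(m[0])                          # scale once for both dimensions
        out = [[v / n for v in r] for r in out]
    return out


def circular_convolve2d(a, b):
    """Circular 2D convolution: pointwise product in the frequency domain."""
    fa, fb = fft2(a), fft2(b)
    prod = [[x * y for x, y in zip(ra, rb)] for ra, rb in zip(fa, fb)]
    return [[v.real for v in r] for r in fft2(prod, inverse=True)]


# Toy usage: convolving with a unit impulse at the origin returns the image.
image = [[float(4 * i + j) for j in range(4)] for i in range(4)]
impulse = [[0.0] * 4 for _ in range(4)]
impulse[0][0] = 1.0
result = circular_convolve2d(image, impulse)
```

In a distributed version, the transpose step is usually where inter-process communication would occur, since each process needs column data held by the others.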

Multiplication and Accumulation (MAC) Datapath for Neural Networks

Platform: Cadence Virtuoso

Designed inverter chain, adder, multiplier, register, and SRAM circuits and integrated them into a single datapath. Ran LVS and DRC checks on the full circuit.

Design of a standardized Bus Interface Encryption

Platform: Intel Quartus Prime, ModelSim

Designed a simplified version of the Avalon interface in Quartus and analyzed its functionality in ModelSim.

32-bit MIPS Processor Without Pipelining

Platform: Cadence (NC-Sim, SimVision, Genus, Innovus)

Wrote the processor RTL in Verilog, verified it with a testbench, and completed synthesis, floorplanning, placement, clock tree synthesis, routing, and physical verification, producing a final GDSII output.

Hardware Trojan Detection Using The Delay Detection Method

Platform: Cadence (NC-Sim, SimVision, and Genus)

Wrote RTL for the test circuit in Verilog and verified it with a testbench.

Synthesis and Analysis of HDL Design of Different Logic Circuits

Platform: Intel Quartus Prime

Compiled HDL designs, performed timing analysis, examined RTL diagrams, and simulated each design’s response to different stimuli.

Voice Controlled Password Unlock System

Platform: MATLAB

Simulated a password unlock system driven by voice input.

Microcontroller-based Visible Light Communication Through ASCII Encoding and Data Encryption

Platform: Arduino-based hardware project

Completed as a Communication Lab project.

Microcontroller-based Water-bot Prototype

Platform: Hardware-based control system project

Built with an Arduino Mega (ATmega1280), Bluetooth module, servomotor, buck converter, motor driver, and lithium battery as a Control System Lab project.

Pulse Rate Monitoring System Using PPG Sensor

Platform: IC package-based hardware project

Built with a TLC555 timer, low-pass filter, high-pass filter, and 7-segment display.

Beyond Academia

My hobbies and other interests.

Let's Connect

I'm always open to discussing research, collaboration opportunities, or just having a chat.